I’ve had my iPhone X for over a month now and have been very pleased with it overall. One of the unique features of this device is the controversial Notch. The protrusion into the otherwise all-screen device can be considered unattractive, but it is necessary to house the hardware that enables Face ID and Animoji: the TrueDepth camera. And I have to say it works quite well. I am interested to see how this technology could impact our mobile experience in the future, so I started looking for use cases that this technology has already served in the past. I’d like to share some of these use cases, which could have a profound impact on interpersonal communication for the typical person, and some for people in not-so-typical circumstances.

It’s notable, however, that this technology is not new. These depth sensors were popularized by the gaming industry with Microsoft’s Kinect in November 2010. The potential of this technology was recognized instantly, and within a week drivers were available to interface with this hardware directly, opening up the world of depth to hobbyists and researchers. What is new is that it is native hardware on the mobile platform.


Lytro Cinema’s Depth Screen

The first established use case of depth cameras I came across concerned YouTube personalities and live-streaming gamers. These streamers superimpose themselves over the content they are sharing, such as a video game. A traditional webcam captures rectangular video frames that include everything in view: the person, their chair, the contents of the room behind them, etc. Everything in the video feed beyond the person serves simply as a distraction and a waste of screen real estate. The historic solution to this problem was to use a green screen. Problem solved, no more background.

Now, with depth cameras, there is an alternative. These cameras simply cut out anything past a certain distance using depth information. There are many consumer webcams available that offer this feature, and Lytro is taking this technology to the big screen with its Lytro Cinema’s Depth Screen feature. But how could this translate to mobile? Most of us aren’t trying to stream games or make movies with an elaborate CGI environment. Yet there may be something to cutting out the distractions like the streamers have. It’s easy to imagine many environments where the background could be a huge distraction during a FaceTime call: busy coffee shops, airports, etc. Instead of seeing all the hustle and bustle behind a loved one, wouldn’t it be nice if you could FaceTime them and cut it all out? Furthermore, what if you could replace the background with a common scene so it appears as if you’re sitting at two ends of a table on a private patio? It seems that could facilitate a much more meaningful and intimate conversation by offering artificial calm and privacy in an otherwise chaotic and public environment.
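To make the "cut out anything past a certain distance" idea concrete, here is a minimal sketch in plain Python. It is not how any particular camera or app does it: the function name `clip_background`, the 1-metre default cutoff, and the nested-list frame layout are all assumptions made for illustration. It only shows the core trick, which is a per-pixel comparison against a depth threshold.

```python
def clip_background(pixels, depth_m, max_depth_m=1.0, fill=(0, 0, 0)):
    """Return a copy of `pixels` with everything farther than max_depth_m replaced.

    pixels      -- rows of (r, g, b) tuples (hypothetical frame layout)
    depth_m     -- rows of per-pixel distances in metres, same shape as pixels
    max_depth_m -- assumed cutoff; anything beyond it counts as background
    fill        -- colour used in place of background pixels
    """
    if len(pixels) != len(depth_m):
        raise ValueError("pixels and depth_m must have the same number of rows")

    clipped = []
    for pixel_row, depth_row in zip(pixels, depth_m):
        if len(pixel_row) != len(depth_row):
            raise ValueError("pixels and depth_m rows must have the same length")
        # Keep a pixel only when its depth is within the cutoff. A NaN depth
        # (no reading) fails the comparison and is treated as background.
        clipped.append([
            pixel if depth <= max_depth_m else fill
            for pixel, depth in zip(pixel_row, depth_row)
        ])
    return clipped
```

Passing a tiny two-by-two frame with depths of roughly 0.6 m for the person and 3 m for the wall behind them would keep the near pixels and paint the far ones with `fill`. Swapping `fill` for the matching pixel of another image is all it would take to get the "private patio" effect described above.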

Polygram

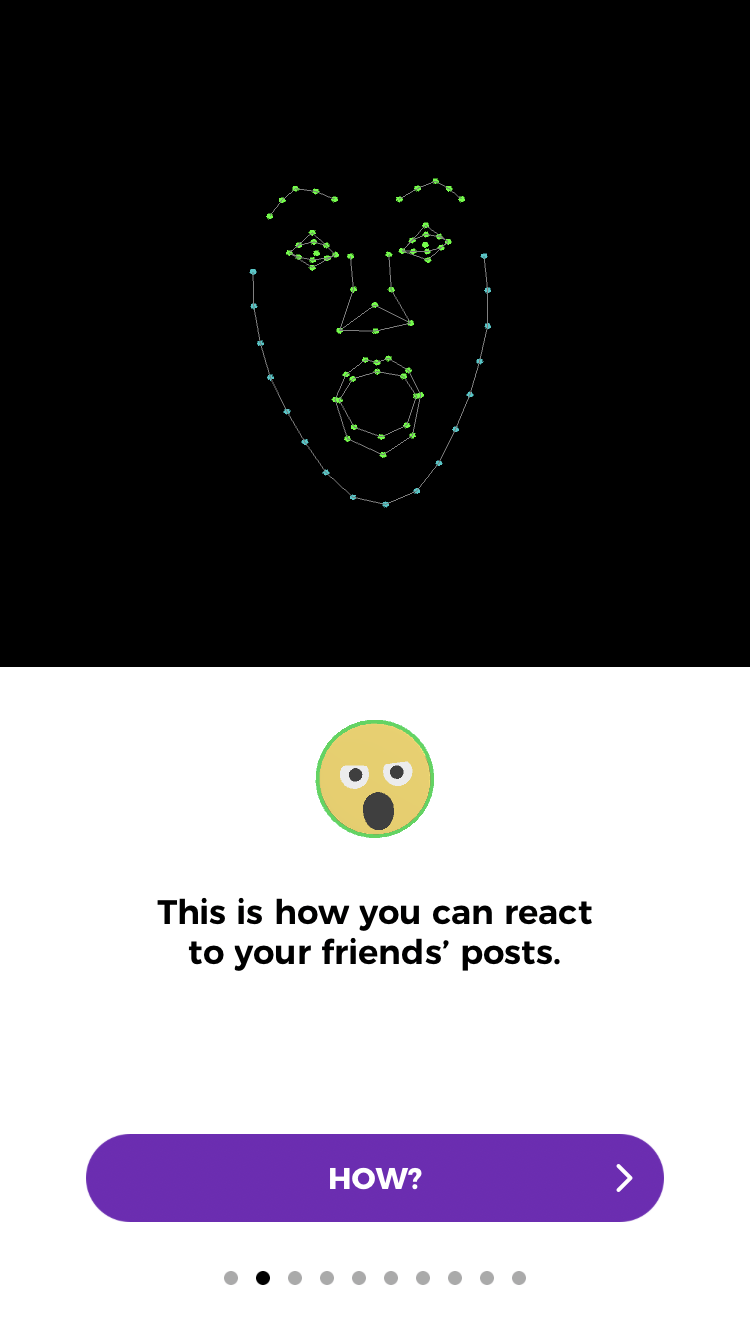

Let’s say you are a big YouTube star and you are streaming from your phone. Wouldn’t it be neat to get real-time feedback on the aggregate emotional response of your viewers? Was that joke funny or a little too off-color? This is a reality on Polygram, a social network that understands human expressions. With high-fidelity face scanning, this kind of expression recognition could plausibly become faster and more accurate than ever before. How about a one-on-one scenario? It could prove to be a useful tool to transcend cultural barriers, where it may be more difficult to translate emotional cues.

Facial expression identification in Polygram

Well, we already do this manually when we select a Facebook reaction to like, or love, or laugh at a post. But there is certainly a UX limit to the number of reaction options Facebook can offer, so we select from a choice few. Sentiment analysis does not share these same limitations and could open the door for a plethora of new, automated reactions as you read. This might be a creepy concept for people at this time, but with every cute Fox Animoji we create we become a little more accustomed to this hyper-accurate face-tracking technology.

While these are fun and relatable topics, what first came to my mind was a BBC documentary I saw about the daily lives of people with autism spectrum disorders. It’s very common for these individuals to have difficulty detecting and expressing emotion. Flashcards that pair emotions with facial expressions are commonly used as a teaching tool. Real-time sentiment analysis could be a much more powerful way to learn while also providing the missing context while FaceTiming. MIT’s Rosalind Picard has been working on this exact solution, and she’s received interesting requests from study participants with Asperger’s who asked, not for information to improve communication, but for hints on when to “back off.” This is yet another example of how this technology can help people have productive, meaningful conversations.

Blocks and Motion Savvy

The last, and perhaps my favorite, use case concerns a community that is light years beyond the average person’s use of non-verbal communication. A community that almost exclusively uses non-verbal communication: the deaf community. These depth cameras are not only good at tracking faces; technologies such as Leap Motion have proven to be incredibly accurate at tracking hands as well.

I had my first experience with Leap Motion with our friends over at You Are Here in their immersive experience lab. The demo I saw was called Blocks: it was a simple but powerful example of how fast and accurate their technology was. My experience with Leap Motion on a VR platform was very cool, but it was literally “all fun and games.” A more powerful use case is the extent to which this technology can bridge the gap between deaf culture and hearing culture by recognizing sign language. Speech-to-text is already a ubiquitous technology, but I was pleased to find that “sign-to-speech” technology exists as well. This is demonstrated well by Motion Savvy, which was made possible by the depth information provided by the Leap Motion hardware. It seems to me the iPhone X has the hardware to do this and it is now purely a software effort. It is likely that the iPhone X will become a convenient, portable sign-to-speech translator in the near future. This could be a huge advancement in creating faster feedback loops and more intimate communication between friends and family in the deaf and hearing communities.

By experiencing depth we can increase focus and feelings of privacy by clipping the backgrounds, increase emotional awareness with sentiment analysis, and break down the barrier between sign language and verbal communication. Through these advancements, we start to see the potential the TrueDepth camera has to bring us closer together. I’m not claiming that the TrueDepth camera is powerful enough to unlock all these use cases or that it is even required. However, basically everything we’ve discussed here is available in some form or fashion using depth-sensing technologies today. We are likely only scratching the surface of what is to come and I hope you are all as excited as I am about seeing what the future holds.

I’d love to hear any additional interesting use cases, stories, or comments! Reply below or let me know on our Twitter!

Jon Day
